chan

A channel is a special data structure and the most visible expression of Go's CSP (communicating sequential processes) philosophy. The core idea of CSP is that processes exchange data by passing messages. Accordingly, channels let goroutines communicate with one another easily.

    package main

    import "fmt"

    func main() {
        done := make(chan struct{})
        go func() {
            // do something
            done <- struct{}{} // signal completion
        }()
        <-done // wait for the goroutine to signal
        fmt.Println("finished")
    }

Besides communication, channels can also be used to synchronize goroutines. In systems that need concurrency, channels are almost ubiquitous. To better understand how they work, the sections below walk through their implementation.

Structure

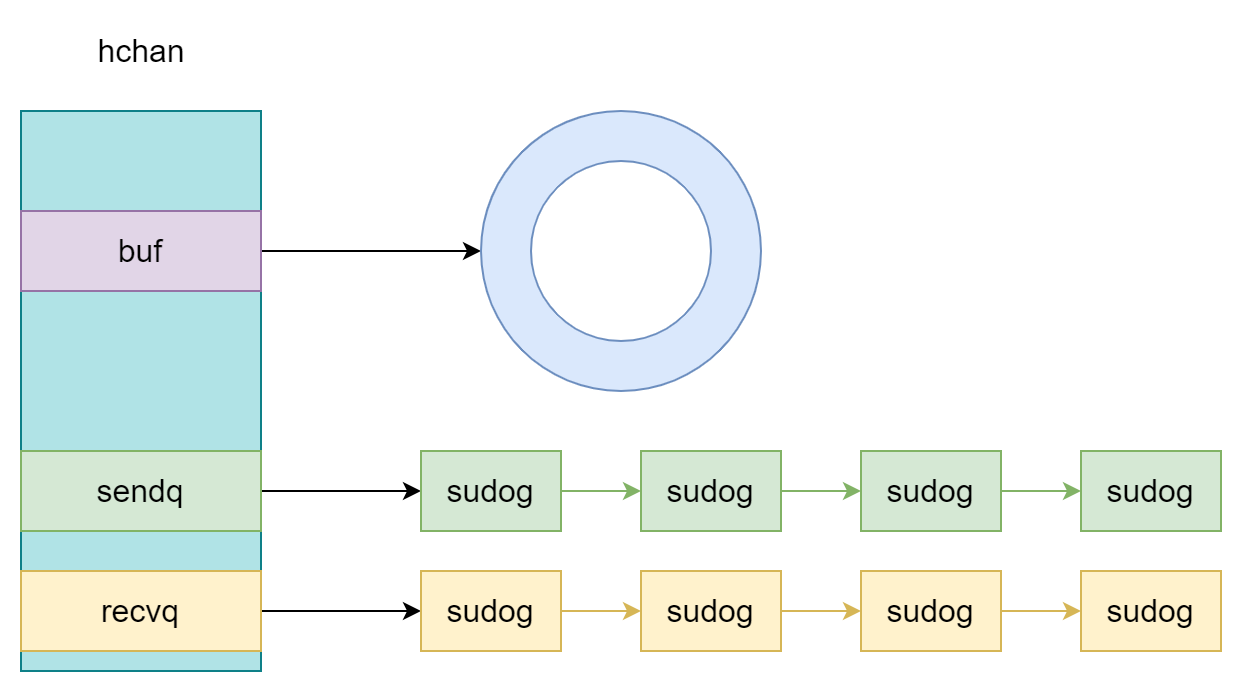

At runtime, channel is represented by the runtime.hchan struct, which contains the following fields:

    type hchan struct {
        qcount   uint           // total data in the queue
        dataqsiz uint           // size of the circular queue
        buf      unsafe.Pointer // points to an array of dataqsiz elements
        elemsize uint16
        closed   uint32
        elemtype *_type // element type
        sendx    uint   // send index
        recvx    uint   // receive index
        recvq    waitq  // list of recv waiters
        sendq    waitq  // list of send waiters
        lock     mutex
    }

As the lock field shows, a channel is essentially a circular queue protected by a lock. The other fields are explained below:

- qcount: total number of elements currently in the queue

- dataqsiz: size of the circular queue

- buf: pointer to an array of dataqsiz elements, which is the circular queue itself

- closed: whether the channel is closed

- sendx, recvx: send and receive indices

- sendq, recvq: linked lists of goroutines waiting to send and to receive, made up of runtime.sudog nodes

    type waitq struct {
        first *sudog
        last  *sudog
    }

The figure at the start of this section shows how these fields fit together.

Calling len or cap on a channel simply returns its hchan.qcount or hchan.dataqsiz field, respectively.
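As a small illustration of the point above, the sketch below fills part of a buffered channel and prints len and cap. The helper name lenCap is made up for this example; only make, len and cap come from the language.

```go
package main

import "fmt"

// lenCap is a hypothetical helper: it creates a buffered channel, sends
// `sends` values into it and reports len and cap afterwards.
// It assumes sends <= capacity, otherwise the loop would block.
func lenCap(capacity, sends int) (int, int) {
	ch := make(chan int, capacity)
	for i := 0; i < sends; i++ {
		ch <- i
	}
	// len reflects the number of queued elements (qcount),
	// cap the size of the buffer (dataqsiz).
	return len(ch), cap(ch)
}

func main() {
	l, c := lenCap(4, 2)
	fmt.Println(l, c) // 2 4
}
```

For an unbuffered channel both values are always 0, since there is no buffer to count.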

Creation

Normally, there's only one way to create a channel, using the make function:

    ch := make(chan int, size)

The compiler translates this into a call to runtime.makechan, which performs the actual creation. Its code is as follows:

    func makechan(t *chantype, size int) *hchan {
        elem := t.Elem
        // (size and overflow checks omitted)
        mem, overflow := math.MulUintptr(elem.Size_, uintptr(size))
        var c *hchan
        switch {
        case mem == 0:
            c = (*hchan)(mallocgc(hchanSize, nil, true))
            c.buf = c.raceaddr()
        case elem.PtrBytes == 0:
            c = (*hchan)(mallocgc(hchanSize+mem, nil, true))
            c.buf = add(unsafe.Pointer(c), hchanSize)
        default:
            c = new(hchan)
            c.buf = mallocgc(mem, elem, true)
        }
        c.elemsize = uint16(elem.Size_)
        c.elemtype = elem
        c.dataqsiz = uint(size)
        return c
    }

The logic is simple: it mainly allocates memory for the channel. It first computes the memory the buffer needs from size and the element size elem.Size_, then handles three cases:

- The buffer needs no memory (size is 0, or the element size is 0): only hchanSize is allocated

- The element type contains no pointers: the channel header and the circular queue are allocated together as one contiguous block

- The element type contains pointers: the channel and the circular queue are allocated separately

If the circular queue holds elements that contain pointers, those pointers reference other objects, so the GC has to scan the buffer while marking. Elements without pointers need no scanning, so storing them in the same contiguous block as the channel header avoids unnecessary work. After allocating memory, makechan fills in the remaining bookkeeping fields.
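The three-way decision described above can be sketched in ordinary Go. The function allocStrategy and its parameters are hypothetical and exist only for illustration: it names the branch that would be taken and allocates nothing, and unlike the runtime it does not check the multiplication for overflow.

```go
package main

import "fmt"

// allocStrategy is a hypothetical model of the switch in makechan.
// elemSize and size stand in for elem.Size_ and the requested capacity,
// hasPointers for elem.PtrBytes != 0.
func allocStrategy(elemSize, size uintptr, hasPointers bool) string {
	mem := elemSize * size // the runtime also checks this for overflow
	switch {
	case mem == 0:
		return "header only"
	case !hasPointers:
		return "header and buffer in one allocation"
	default:
		return "header and buffer allocated separately"
	}
}

func main() {
	fmt.Println(allocStrategy(0, 8, false))  // e.g. chan struct{} with capacity 8
	fmt.Println(allocStrategy(8, 0, false))  // e.g. unbuffered chan int64
	fmt.Println(allocStrategy(8, 16, false)) // e.g. buffered chan int64
	fmt.Println(allocStrategy(8, 16, true))  // e.g. buffered channel of pointers
}
```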

Incidentally, when the channel capacity is given as a 64-bit integer, the runtime.makechan64 function is used instead. It is essentially a call to runtime.makechan with one extra range check:

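    func makechan64(t *chantype, size int64) *hchan {
        // reject sizes that do not fit into an int
        if int64(int(size)) != size {
            panic(plainError("makechan: size out of range"))
        }
        return makechan(t, int(size))
    }

Generally, size should not exceed math.MaxInt32, which is the largest value that survives this conversion when int is 32 bits wide.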
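Size validation can be observed from user code. The sketch below assumes, beyond what the excerpt shows, that the full runtime.makechan also rejects a negative size with a run-time panic when the size is only known at run time. The helper tryMake is hypothetical.

```go
package main

import "fmt"

// tryMake is a hypothetical helper: it creates a channel whose size is only
// known at run time and converts a panic from make into an error.
func tryMake(size int) (capacity int, err error) {
	defer func() {
		if r := recover(); r != nil {
			err = fmt.Errorf("make panicked: %v", r)
		}
	}()
	ch := make(chan int, size)
	return cap(ch), nil
}

func main() {
	fmt.Println(tryMake(3))  // a valid size
	fmt.Println(tryMake(-1)) // a size outside the accepted range
}
```

A negative constant size would already be rejected by the compiler, which is why the example passes the size through a variable.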

Send

When sending data to a channel, we place the data to be sent on the right side of the arrow:

    ch <- struct{}{}

The compiler translates this into a call to runtime.chansend1, while the function that actually sends the data is runtime.chansend. chansend1 passes the elem pointer, which points to the element being sent:

    // entry point for c <- x from compiled code.
    func chansend1(c *hchan, elem unsafe.Pointer) {
        chansend(c, elem, true, getcallerpc())
    }

chansend first checks whether the channel is nil. The block argument indicates whether the send is blocking (non-blocking sends originate from select statements). A blocking send on a nil channel does not panic: the goroutine is parked by gopark and blocks forever. A non-blocking send checks, without taking the lock, whether the channel is full and returns immediately if it is:

    func chansend(c *hchan, ep unsafe.Pointer, block bool, callerpc uintptr) bool {
        if c == nil {
            if !block {
                return false
            }
            gopark(nil, nil, waitReasonChanSendNilChan, traceBlockForever, 2)
            throw("unreachable")
        }
        if !block && c.closed == 0 && full(c) {
            return false
        }
        ...
    }

It then acquires the lock and checks whether the channel is closed. If it is, the send panics:

    func chansend(c *hchan, ep unsafe.Pointer, block bool, callerpc uintptr) bool {
        lock(&c.lock)
        if c.closed != 0 {
            unlock(&c.lock)
            panic(plainError("send on closed channel"))
        }
        ...
    }

After that, it tries to dequeue a sudog from the recvq queue and, if a receiver is waiting, hands the data over through the runtime.send function:

    if sg := c.recvq.dequeue(); sg != nil {
        send(c, sg, ep, func() { unlock(&c.lock) }, 3)
        return true
    }

The send function is shown below. It uses runtime.memmove to copy the data directly to the receiving goroutine's target address, then calls runtime.goready to put that goroutine into the _Grunnable state so that it rejoins scheduling. The recvx and sendx updates keep the queue indices consistent. In the runtime source this bookkeeping only runs when the race detector is enabled:

    func send(c *hchan, sg *sudog, ep unsafe.Pointer, unlockf func(), skip int) {
        c.recvx++
        if c.recvx == c.dataqsiz {
            c.recvx = 0
        }
        c.sendx = c.recvx // c.sendx = (c.sendx+1) % c.dataqsiz
        if sg.elem != nil {
            sendDirect(c.elemtype, sg, ep)
            sg.elem = nil
        }
        gp := sg.g
        unlockf()
        gp.param = unsafe.Pointer(sg)
        sg.success = true
        goready(gp, skip+1)
    }

    func sendDirect(t *_type, sg *sudog, src unsafe.Pointer) {
        dst := sg.elem
        memmove(dst, src, t.Size_)
    }

Because a waiting receiver was found, the data is copied straight to the receiver without passing through the circular queue. If no receiver is waiting and the buffer still has room, the data is placed in the circular queue and the function returns:

    func chansend(c *hchan, ep unsafe.Pointer, block bool, callerpc uintptr) bool {
        ...
        if c.qcount < c.dataqsiz {
            qp := chanbuf(c, c.sendx)
            typedmemmove(c.elemtype, qp, ep)
            c.sendx++
            if c.sendx == c.dataqsiz {
                c.sendx = 0
            }
            c.qcount++
            unlock(&c.lock)
            return true
        }
        ...
    }

If the buffer is full, a non-blocking send returns immediately:

    if !block {
        unlock(&c.lock)
        return false
    }

A blocking send continues with the following code:

    func chansend(c *hchan, ep unsafe.Pointer, block bool, callerpc uintptr) bool {
        ...
        gp := getg()
        mysg := acquireSudog()
        mysg.releasetime = 0
        mysg.elem = ep
        mysg.waitlink = nil
        mysg.g = gp
        mysg.isSelect = false
        mysg.c = c
        gp.waiting = mysg
        gp.param = nil
        c.sendq.enqueue(mysg)
        gp.parkingOnChan.Store(true)
        gopark(chanparkcommit, unsafe.Pointer(&c.lock), waitReasonChanSend, traceBlockChanSend, 2)
        KeepAlive(ep)
        ...
    }

First, it wraps the current goroutine in a sudog and adds it to hchan.sendq, the queue of waiting senders. It then calls runtime.gopark to block the current goroutine, putting it into the _Gwaiting state until a receiver wakes it. It also uses runtime.KeepAlive to keep the value being sent alive until the receiver has copied it. Once awakened, it runs the following cleanup code:

    func chansend(c *hchan, ep unsafe.Pointer, block bool, callerpc uintptr) bool {
        ...
        gp.waiting = nil
        gp.activeStackChans = false
        closed := !mysg.success
        gp.param = nil
        mysg.c = nil
        if closed {
            if c.closed == 0 {
                throw("chansend: spurious wakeup")
            }
            panic(plainError("send on closed channel"))
        }
        releaseSudog(mysg)
        return true
    }

As you can see, a channel send falls into one of the following cases:

- The channel is nil: a blocking send blocks forever, and a non-blocking send returns false

- The channel is closed: the send panics

- recvq is not empty: the data is sent directly to the waiting receiver

- No goroutine is waiting and the buffer has room: the data is added to the buffer

- The buffer is full: the sending goroutine blocks until another goroutine receives data

Note that the send logic above contains no handling for data that does not fit into a full buffer. That data cannot be discarded. It stays referenced by sudog.elem and is handled by the receiver.
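Some of these cases can be observed from user code. The sketch below is only an illustration; trySend and sendPanics are hypothetical helper names. A select with a single send case and a default branch is compiled into a non-blocking send, which is how the block == false path is reached.

```go
package main

import "fmt"

// trySend performs a non-blocking send and reports whether it succeeded.
func trySend(ch chan int, v int) bool {
	select {
	case ch <- v:
		return true
	default:
		return false
	}
}

// sendPanics reports whether a plain (blocking) send on ch panics.
// It should only be called when the send cannot block forever.
func sendPanics(ch chan int, v int) (panicked bool) {
	defer func() {
		if recover() != nil {
			panicked = true
		}
	}()
	ch <- v
	return false
}

func main() {
	var nilCh chan int
	fmt.Println(trySend(nilCh, 1)) // false: a nil channel is never ready

	buffered := make(chan int, 1)
	fmt.Println(trySend(buffered, 1)) // true: the buffer has room
	fmt.Println(trySend(buffered, 2)) // false: the buffer is full

	close(buffered)
	fmt.Println(sendPanics(buffered, 3)) // true: send on closed channel
}
```

The example avoids a plain send on the nil channel, since that would block the goroutine forever.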

Receive

In Go, there are two syntaxes for receiving data from a channel. The first is reading data only:

    data := <-ch

The second also reports whether a value was actually received:

    data, ok := <-ch

The compiler translates these two forms into calls to runtime.chanrecv1 and runtime.chanrecv2, both of which are thin wrappers around runtime.chanrecv. The receive logic begins like the send logic, by checking whether the channel is nil:

    func chanrecv(c *hchan, ep unsafe.Pointer, block bool) (selected, received bool) {
        if c == nil {
            if !block {
                return
            }
            gopark(nil, nil, waitReasonChanReceiveNilChan, traceBlockForever, 2)
            throw("unreachable")
        }
        ...
    }

Then, for a non-blocking receive, it checks without locking whether the channel is empty. If the channel is empty and not closed, it returns immediately. If it is empty and closed, it clears the memory of the receive target and returns:

    func chanrecv(c *hchan, ep unsafe.Pointer, block bool) (selected, received bool) {
        ...
        if !block && empty(c) {
            if atomic.Load(&c.closed) == 0 {
                return
            }
            if empty(c) {
                if ep != nil {
                    typedmemclr(c.elemtype, ep)
                }
                return true, false
            }
        }
        ...
    }

Then it takes the lock. As the if c.closed != 0 branch below shows, a closed channel that still holds elements does not return immediately. Execution continues into the code that consumes elements. This is why reading from a channel is still allowed after it has been closed:

    func chanrecv(c *hchan, ep unsafe.Pointer, block bool) (selected, received bool) {
        ...
        if c.closed != 0 {
            if c.qcount == 0 {
                unlock(&c.lock)
                if ep != nil {
                    typedmemclr(c.elemtype, ep)
                }
                return true, false
            }
        } else {
            if sg := c.sendq.dequeue(); sg != nil {
                recv(c, sg, ep, func() { unlock(&c.lock) }, 3)
                return true, true
            }
        }
        ...
    }

If the channel is not closed, it checks whether any goroutine is waiting to send in the sendq queue. If so, runtime.recv handles that sender:

    func recv(c *hchan, sg *sudog, ep unsafe.Pointer, unlockf func(), skip int) {
        if c.dataqsiz == 0 {
            if ep != nil {
                recvDirect(c.elemtype, sg, ep)
            }
        } else {
            qp := chanbuf(c, c.recvx)
            // copy data from queue to receiver
            if ep != nil {
                typedmemmove(c.elemtype, ep, qp)
            }
            // copy data from sender to queue
            typedmemmove(c.elemtype, qp, sg.elem)
            c.recvx++
            if c.recvx == c.dataqsiz {
                c.recvx = 0
            }
            c.sendx = c.recvx // c.sendx = (c.sendx+1) % c.dataqsiz
        }
        sg.elem = nil
        gp := sg.g
        unlockf()
        gp.param = unsafe.Pointer(sg)
        sg.success = true
        goready(gp, skip+1)
    }

recv handles two cases. In the first, the channel capacity is 0 (an unbuffered channel), and the receiver copies the data directly from the sender through runtime.recvDirect. In the second, the buffer is full. There is no explicit check for this, but when the capacity is non-zero and a sender is waiting, the buffer must already be full, because a sender only blocks when it finds no free slot. That check was made on the sender's side. The receiver dequeues the head element of the buffer and copies it to the receive target, then copies the waiting sender's data into the slot that was just freed (this is how the receiver handles the data that did not fit into the buffer). Finally, runtime.goready wakes the sending goroutine, putting it into the _Grunnable state so that it rejoins scheduling.
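A visible consequence of the second case is that values still arrive in FIFO order even when a sender has to wait for buffer space. The sketch below illustrates this with a one-slot buffer; the function name drainWithWaitingSender is made up for the example.

```go
package main

import "fmt"

// drainWithWaitingSender fills a one-slot buffer, starts a second sender
// that may have to wait for space, and then receives both values.
func drainWithWaitingSender() (first, second int) {
	ch := make(chan int, 1)
	ch <- 1 // fills the buffer

	go func() {
		// If the buffer is still full when this runs, the goroutine parks
		// in sendq until the receiver makes room.
		ch <- 2
	}()

	first = <-ch  // the buffered value always comes out first
	second = <-ch // then the value from the second sender
	return first, second
}

func main() {
	fmt.Println(drainWithWaitingSender()) // 1 2
}
```

The result does not depend on scheduling: whether or not the second sender has parked by the time of the first receive, the head of the buffer is delivered first.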

If no goroutine is waiting to send, it checks whether the buffer holds elements waiting to be consumed. If so, it dequeues the head element, copies it to the receive target, and returns:

    func chanrecv(c *hchan, ep unsafe.Pointer, block bool) (selected, received bool) {
        ...
        if c.qcount > 0 {
            // Receive directly from queue
            qp := chanbuf(c, c.recvx)
            if ep != nil {
                typedmemmove(c.elemtype, ep, qp)
            }
            typedmemclr(c.elemtype, qp)
            c.recvx++
            if c.recvx == c.dataqsiz {
                c.recvx = 0
            }
            c.qcount--
            unlock(&c.lock)
            return true, true
        }
        ...
    }

Finally, if the channel holds nothing to consume, runtime.gopark puts the current goroutine into the _Gwaiting state, where it blocks until a sending goroutine wakes it:

    func chanrecv(c *hchan, ep unsafe.Pointer, block bool) (selected, received bool) {
        ...
        gp := getg()
        mysg := acquireSudog()
        mysg.elem = ep
        mysg.waitlink = nil
        gp.waiting = mysg
        mysg.g = gp
        mysg.isSelect = false
        mysg.c = c
        gp.param = nil
        c.recvq.enqueue(mysg)
        gp.parkingOnChan.Store(true)
        gopark(chanparkcommit, unsafe.Pointer(&c.lock), waitReasonChanReceive, traceBlockChanRecv, 2)
        ...
    }

After being awakened, it returns. The returned success value comes from sudog.success. If a sender delivered data, the sender sets this field to true, as the runtime.send function shows:

    func send(c *hchan, sg *sudog, ep unsafe.Pointer, unlockf func(), skip int) {
        ...
        sg.success = true
        goready(gp, skip+1)
    }

    func chanrecv(c *hchan, ep unsafe.Pointer, block bool) (selected, received bool) {
        ...
        gp.waiting = nil
        gp.activeStackChans = false
        success := mysg.success
        gp.param = nil
        mysg.c = nil
        releaseSudog(mysg)
        return true, success
    }

Correspondingly, the sudog.success value that runtime.chansend inspects after waking up is set by the receiver in runtime.recv. Receiver and sender thus cooperate to make the channel work. Overall, receiving is slightly more complex than sending and falls into the following cases:

- The channel is nil: a blocking receive blocks forever, and a non-blocking receive returns immediately

- The channel is closed: if it is empty, the receive returns the zero value immediately; if it is not empty, it is handled like the non-empty buffer case below

- The capacity is 0 and sendq has a waiting sender: the sender's data is copied directly, then the sender is woken up

- The buffer is full and sendq has a waiting sender: the head element of the buffer is dequeued, the sender's data is enqueued, then the sender is woken up

- The buffer is not empty and no sender is waiting: the head element of the buffer is dequeued and returned

- The buffer is empty: the receiver blocks until a sender wakes it
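Several of these cases can be checked from user code with a non-blocking receive. The sketch below is illustrative only; tryRecv is a hypothetical helper that wraps a receive in a select with a default branch.

```go
package main

import "fmt"

// tryRecv performs a non-blocking receive. selected reports whether the
// receive case ran at all, ok whether a real value was delivered.
func tryRecv(ch chan int) (v int, ok, selected bool) {
	select {
	case v, ok = <-ch:
		return v, ok, true
	default:
		return 0, false, false
	}
}

func main() {
	var nilCh chan int
	fmt.Println(tryRecv(nilCh)) // 0 false false: a nil channel is never ready

	ch := make(chan int, 2)
	fmt.Println(tryRecv(ch)) // 0 false false: open but empty

	ch <- 7
	close(ch)
	fmt.Println(tryRecv(ch)) // 7 true true: buffered data survives close
	fmt.Println(tryRecv(ch)) // 0 false true: closed and drained
}
```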

Close

For closing a channel, we use the built-in function close:

    close(ch)

The compiler translates this into a call to runtime.closechan. If the channel passed in is nil or already closed, it panics:

    func closechan(c *hchan) {
        if c == nil {
            panic(plainError("close of nil channel"))
        }
        lock(&c.lock)
        if c.closed != 0 {
            unlock(&c.lock)
            panic(plainError("close of closed channel"))
        }
        c.closed = 1
        ...
    }

Then it dequeues every blocked goroutine from the channel's sendq and recvq and wakes them all up through runtime.goready:

    func closechan(c *hchan) {
        ...
        var glist gList

        // release all readers
        for {
            sg := c.recvq.dequeue()
            if sg == nil {
                break
            }
            gp := sg.g
            sg.success = false
            glist.push(gp)
        }

        // release all writers (they will panic)
        for {
            sg := c.sendq.dequeue()
            if sg == nil {
                break
            }
            gp := sg.g
            sg.success = false
            glist.push(gp)
        }
        unlock(&c.lock)

        // Ready all Gs now that we've dropped the channel lock.
        for !glist.empty() {
            gp := glist.pop()
            gp.schedlink = 0
            goready(gp, 3)
        }
    }

TIP

Incidentally, Go allows unidirectional channels, with the following rules:

- A receive-only channel cannot be sent to

- A receive-only channel cannot be closed

- A send-only channel cannot be received from

These errors are caught by type checking at compile time rather than left to run time. If you are interested, the related code is in these two packages:

- cmd/compile/internal/types2

- cmd/compile/internal/typecheck

    // cmd/compile/internal/types2/stmt.go: 425
    case *syntax.SendStmt:
        ...
        if uch.dir == RecvOnly {
            check.errorf(s, InvalidSend, invalidOp+"cannot send to receive-only channel %s", &ch)
            return
        }
        check.assignment(&val, uch.elem, "send")

Check if Closed

In the early days (before Go 1) there was a built-in function closed for checking whether a channel is closed, but it was soon removed. Channels are typically shared by several goroutines. If closed returns true, the channel really is closed. If it returns false, however, that does not guarantee the channel is still open by the time the caller acts on the answer, because another goroutine may close it at any moment. The return value is therefore unreliable, and using it to decide whether to send is even more dangerous, because sending to a closed channel panics.

If you really need it, you can implement your own. One approach is to probe the channel by writing to it, as shown below:

    func closed(ch chan int) (ans bool) {
        defer func() {
            // a send on a closed channel panics, so recovering means "closed"
            if err := recover(); err != nil {
                ans = true
            }
        }()
        ch <- 0
        return ans
    }

However, this has side effects. If the channel is not closed, it writes a spurious value (or blocks, if no receiver or buffer space is available), and the closed case goes through defer and recover, which costs extra performance. The write approach can therefore be ruled out. The read approach is worth considering, but the channel cannot be read directly, because a plain receive is a blocking one (block is true) and would park the goroutine. It has to be combined with select, since a receive in a select with a default case is non-blocking:

    func closed(ch chan int) bool {
        select {
        case _, received := <-ch:
            return !received
        default:
            return false
        }
    }

This only looks slightly better than the previous version. It gives the right answer only when the channel is closed and its buffer is empty. If the channel still holds elements, the check consumes one of them. There is still no good implementation.
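The side effect is easy to reproduce. The sketch below uses the same select-based check, renamed isClosed here to keep the example self-contained, on a closed channel that still holds one buffered value.

```go
package main

import "fmt"

// isClosed is the select-based check from the text under a different name.
func isClosed(ch chan int) bool {
	select {
	case _, received := <-ch:
		return !received
	default:
		return false
	}
}

func main() {
	ch := make(chan int, 1)
	ch <- 42
	close(ch)

	fmt.Println(isClosed(ch)) // false, although the channel is closed
	fmt.Println(len(ch))      // 0: the check consumed the buffered 42
	fmt.Println(isClosed(ch)) // true only once the buffer is drained
}
```

The first call reports false for a closed channel and silently discards a value, which is exactly the unreliability described above.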

But in fact we don't need to check whether a channel is closed at all. The reason was given at the start of this section: the result is unreliable. What we should do instead is use and close channels correctly:

- Never close a channel on the receiving side. The fact that closing a receive-only channel does not compile already tells you not to do this. Let the sender do it.

- If there are multiple senders, let a separate goroutine perform the close, so that close is called by only one party and only once.

- When passing a channel around, restrict it to receive-only or send-only where possible.

Following these principles avoids most problems.
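One possible way to apply these principles together is sketched below. It assumes a sync.WaitGroup is an acceptable way to learn that all senders are done; the function names produce and sum are made up for the example.

```go
package main

import (
	"fmt"
	"sync"
)

// produce starts n senders, each holding only a send-only view of the
// channel, and lets one extra goroutine close it after all have finished.
func produce(n int) <-chan int {
	ch := make(chan int)
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func(out chan<- int, v int) {
			defer wg.Done()
			out <- v
		}(ch, i)
	}
	go func() {
		wg.Wait()
		close(ch) // the only close call, made exactly once
	}()
	return ch // callers get a receive-only channel and cannot close it
}

// sum drains the channel; the loop ends once it is closed and empty.
func sum(in <-chan int) int {
	total := 0
	for v := range in {
		total += v
	}
	return total
}

func main() {
	fmt.Println(sum(produce(4))) // 0+1+2+3 = 6
}
```

Because the receiver only ever sees a <-chan int, an accidental close on the receiving side would be rejected at compile time.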