memory

Unlike traditional C/C++, Go is a GC language. In most cases, memory allocation and deallocation are automatically managed by Go. The compiler decides whether an object's memory should be allocated on the stack or heap, basically without user participation in memory management. Users only need to use memory. In Go, heap memory management mainly has two large components: the memory allocator is responsible for heap memory allocation, and the garbage collector is responsible for recycling and releasing useless heap memory. This article mainly discusses how the memory allocator works. Go's memory allocator is largely influenced by Google's TCMalloc memory allocator.

Allocators

In Go, there are two types of memory allocators: one is the linear allocator, and the other is the linked allocator.

Linear Allocation

The linear allocator corresponds to the runtime.linearAlloc structure, as shown below:

type linearAlloc struct {

next uintptr // next free byte

mapped uintptr // one byte past end of mapped space

end uintptr // end of reserved space

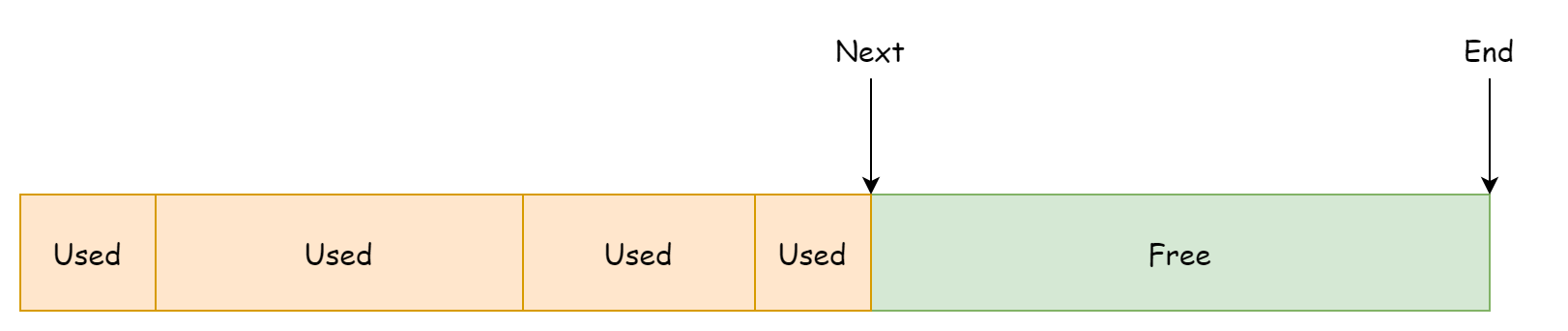

mapMemory bool // transition memory from Reserved to Ready if true

}This allocator pre-allocates a contiguous memory space from the operating system. next points to the usable memory address, and end points to the end address of the memory space, which can be understood as shown in the figure below.

The linear allocator's memory allocation method is very easy to understand. It checks whether there's enough remaining space to accommodate based on the requested memory size. If enough, it updates the next field and returns the starting address of the remaining space. The code is as follows:

func (l *linearAlloc) alloc(size, align uintptr, sysStat *sysMemStat) unsafe.Pointer {

p := alignUp(l.next, align)

if p+size > l.end {

return nil

}

l.next = p + size

return unsafe.Pointer(p)

}The advantage of this allocation method is that it's fast and simple. The disadvantage is also quite obvious: it cannot reuse released memory because the next field only points to the remaining space memory address. It cannot perceive previously used and released memory space, which causes a lot of memory space waste, as shown in the figure below.

So linear allocation is not the main allocation method in Go. It's only used as a memory pre-allocation function on 32-bit machines.

Linked Allocation

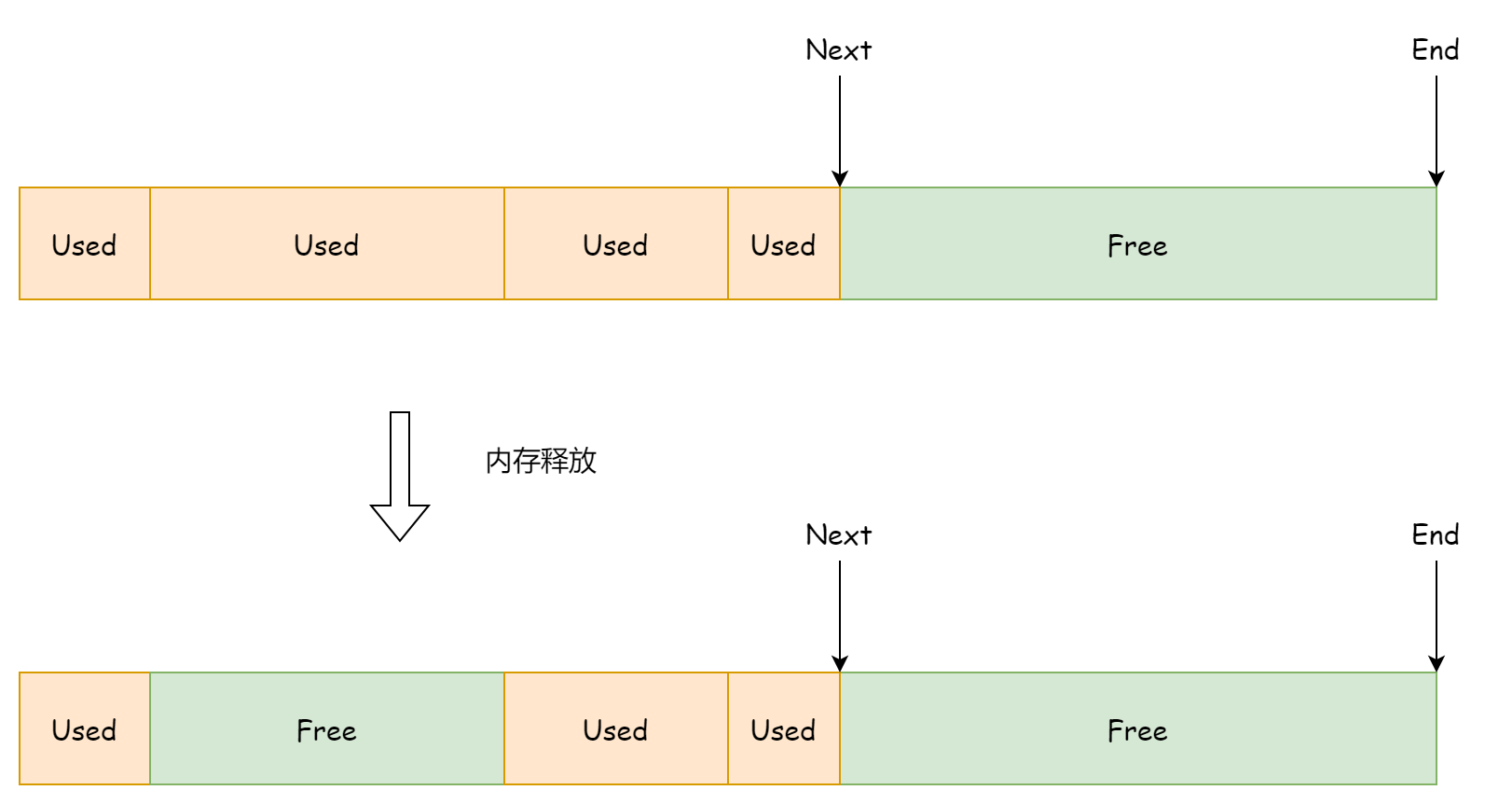

The linked allocator corresponds to the runtime.fixalloc structure. Memory allocated by the linked allocator is not contiguous and exists as a singly linked list. The linked allocator consists of several fixed-size memory blocks, and each memory block consists of several fixed-size memory chunks. Each time memory is allocated, a fixed-size memory chunk is used.

type fixalloc struct {

size uintptr

first func(arg, p unsafe.Pointer) // called first time p is returned

arg unsafe.Pointer

list *mlink

chunk uintptr // use uintptr instead of unsafe.Pointer to avoid write barriers

nchunk uint32 // bytes remaining in current chunk

nalloc uint32 // size of new chunks in bytes

inuse uintptr // in-use bytes now

stat *sysMemStat

zero bool // zero allocations

}

type mlink struct {

_ sys.NotInHeap

next *mlink

}Its fields are not as simple and easy to understand as the linear allocator. Here's a brief introduction to the important ones:

size: How much memory is used each time memory is allocated.list: Points to the head node of reusable memory chunks. Each memory chunk size is determined bysize.chunk: Points to the idle address in the currently used memory block.nchunk: Remaining available bytes in the current memory block.nalloc: Memory block size, fixed at 16KB.inuse: Total bytes of memory currently in use.zero: Whether to clear memory when reusing memory blocks.

The linked allocator holds references to the current memory block and reusable memory chunks. Each memory block size is fixed at 16KB, which is set during initialization.

const _FixAllocChunk = 16 << 10

func (f *fixalloc) init(size uintptr, first func(arg, p unsafe.Pointer), arg unsafe.Pointer, stat *sysMemStat) {

if size > _FixAllocChunk {

throw("runtime: fixalloc size too large")

}

if min := unsafe.Sizeof(mlink{}); size < min {

size = min

}

f.size = size

f.first = first

f.arg = arg

f.list = nil

f.chunk = 0

f.nchunk = 0

f.nalloc = uint32(_FixAllocChunk / size * size)

f.inuse = 0

f.stat = stat

f.zero = true

}The distribution of memory blocks is shown in the figure below. Memory blocks in the figure are arranged according to creation time. In reality, their addresses are not contiguous.

The memory size allocated by the linked allocator each time is also fixed, determined by fixalloc.size. During allocation, it first checks whether there are reusable memory blocks. If so, it prioritizes using reusable memory blocks, then uses the current memory block. If the current memory block's remaining space is insufficient to accommodate, it creates a new memory block. This part of the logic corresponds to the following code:

func (f *fixalloc) alloc() unsafe.Pointer {

if f.size == 0 {

print("runtime: use of FixAlloc_Alloc before FixAlloc_Init\n")

throw("runtime: internal error")

}

if f.list != nil {

v := unsafe.Pointer(f.list)

f.list = f.list.next

f.inuse += f.size

if f.zero {

memclrNoHeapPointers(v, f.size)

}

return v

}

if uintptr(f.nchunk) < f.size {

f.chunk = uintptr(persistentalloc(uintptr(f.nalloc), 0, f.stat))

f.nchunk = f.nalloc

}

v := unsafe.Pointer(f.chunk)

if f.first != nil {

f.first(f.arg, v)

}

f.chunk = f.chunk + f.size

f.nchunk -= uint32(f.size)

f.inuse += f.size

return v

}The advantage of the linked allocator is precisely that it can reuse released memory. The basic unit for reusing memory is a fixed-size memory chunk, whose size is determined by fixalloc.size. When releasing memory, the linked allocator adds the memory chunk as the head node to the idle memory chunk linked list. The code is as follows:

func (f *fixalloc) free(p unsafe.Pointer) {

f.inuse -= f.size

v := (*mlink)(p)

v.next = f.list

f.list = v

}Memory Components

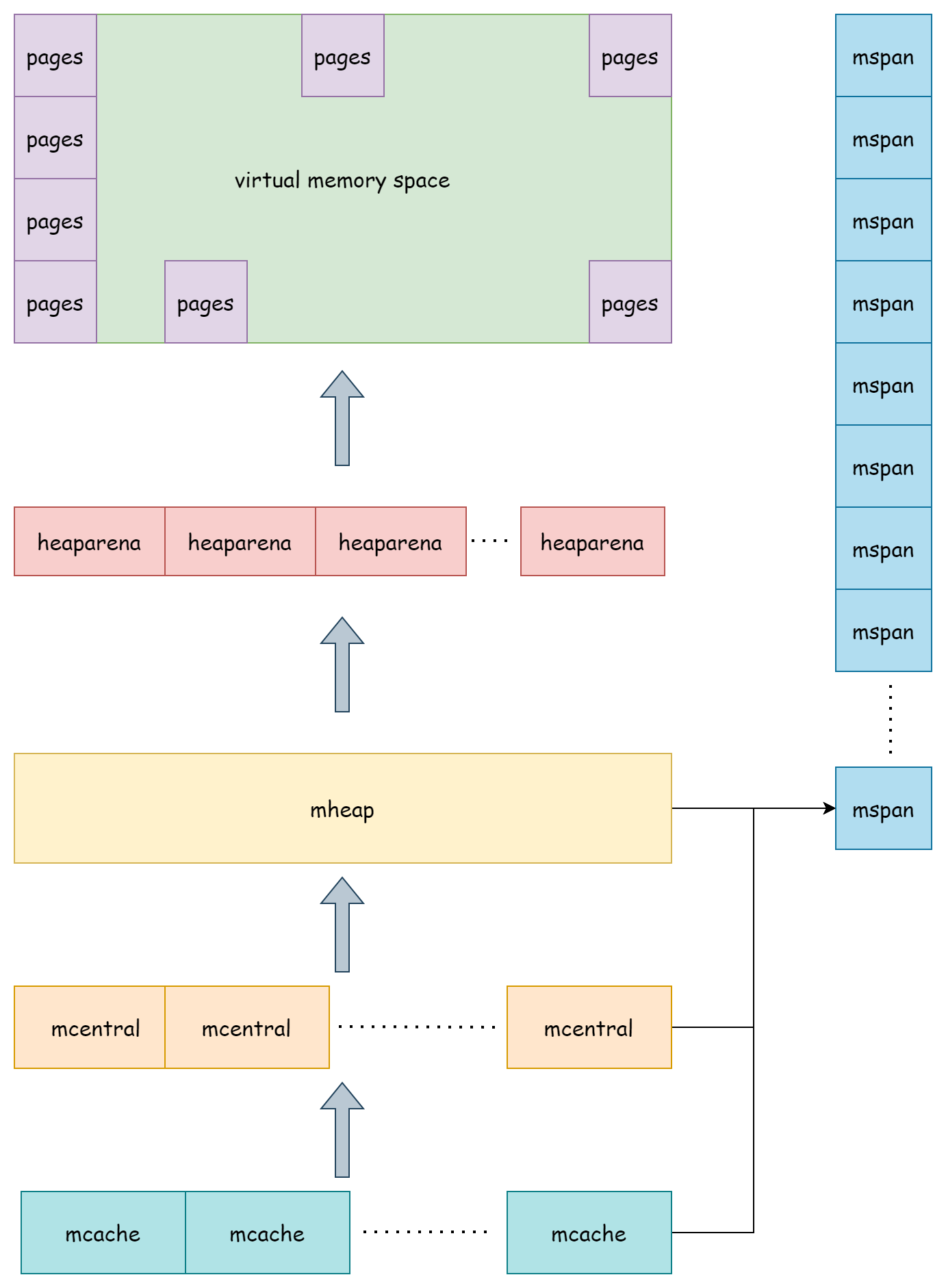

Go's memory allocator is mainly composed of the following components: mspan, heaparena, mcache, mcentral, and mheap. They work layer by layer to manage Go's heap memory.

mspan

runtime.mspan is the basic unit in Go memory allocation. Its structure is as follows:

type mspan struct {

next *mspan // next span in list, or nil if none

prev *mspan // previous span in list, or nil if none

startAddr uintptr // address of first byte of span aka s.base()

npages uintptr // number of pages in span

freeindex uintptr

spanclass spanClass // size class and noscan (uint8)

needzero uint8 // needs to be zeroed before allocation

elemsize uintptr // computed from sizeclass or from npages

limit uintptr // end of data in span

state mSpanStateBox // mSpanInUse etc; accessed atomically (get/set methods)

nelems uintptr // number of object in the span.

allocCache uint64

allocCount uint16 // number of allocated objects

...

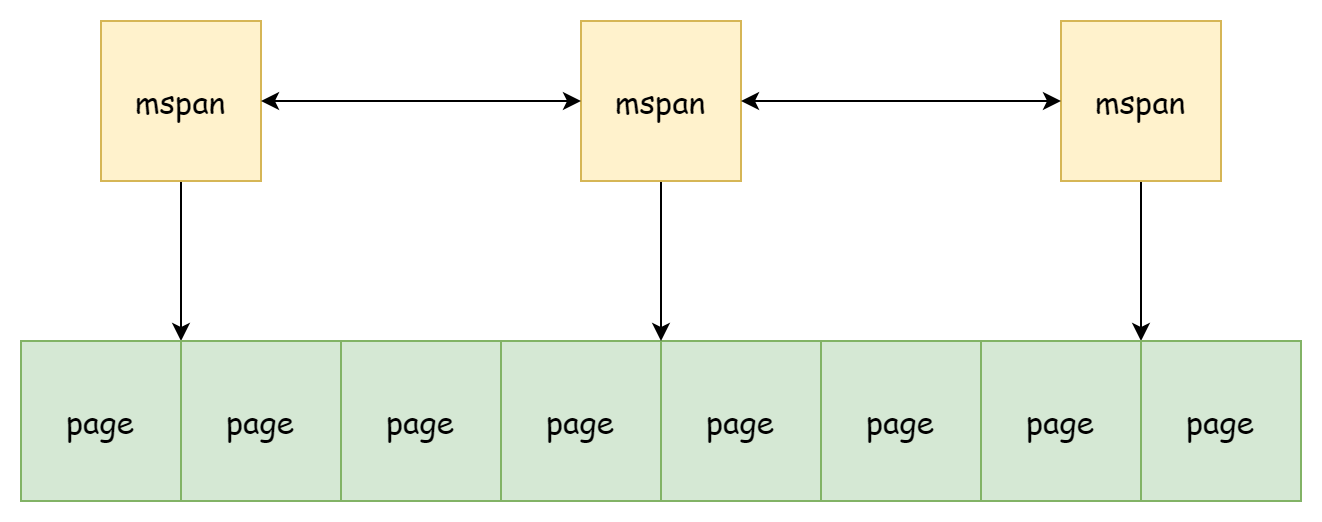

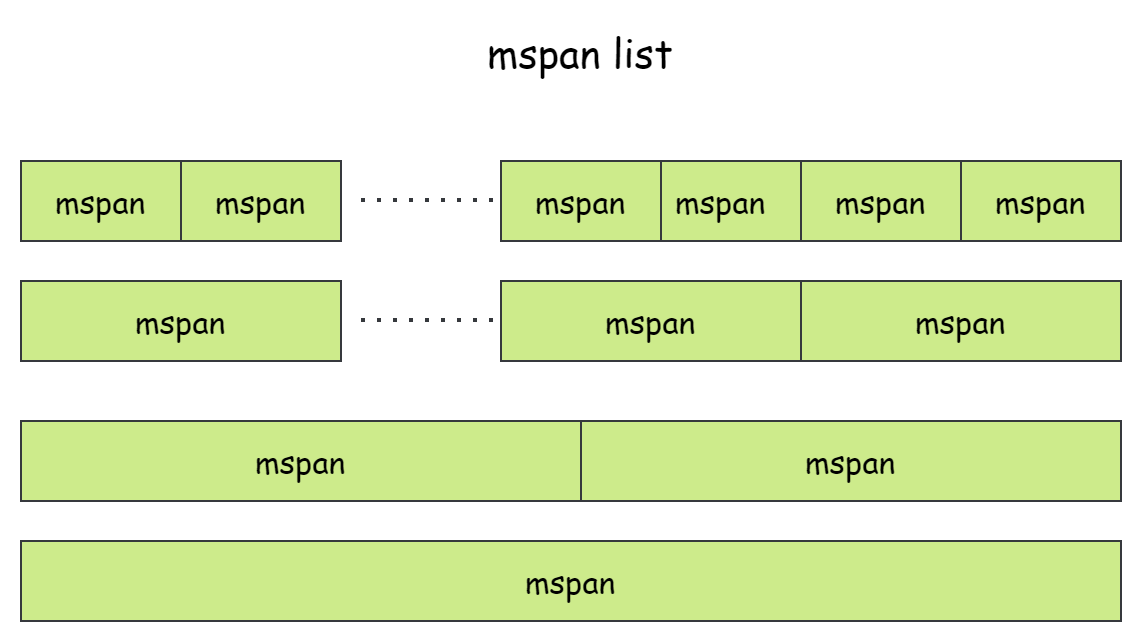

}mspan and mspan are linked via next and prev as a doubly linked list. Memory addresses are not contiguous. Each mspan manages mspan.npages pages of runtime.pageSize size memory. Usually, the page size is 8KB. mspan.startAddr records the starting address of these pages, and mspan.limit records the end address of used memory. Each mspan stores elements of fixed size elemsize, so the number of elements it can hold is also fixed. Due to the fixed quantity, objects are distributed in mspan like an array, in the range [0, nelems]. freeindex records the index of the next available slot for storing objects. mspan has three states:

mSpanDead: Memory has been released.mSpanInUse: Allocated to the heap.mSpanManual: Allocated to manually managed memory parts, such as the stack.

Determining mspan element size is spanClass. spanClass itself is a uint8 type integer. The high seven bits store the class value 0-67, and the last bit is used to indicate noscan, i.e., whether it contains pointers.

type spanClass uint8

func (sc spanClass) sizeclass() int8 {

return int8(sc >> 1)

}

func (sc spanClass) noscan() bool {

return sc&1 != 0

}It has a total of 68 different values. All values are stored in table form in the runtime.sizeclasses.go file. At runtime, using spanClass through runtime.class_to_size can obtain mspan object size, and through class_to_allocnpages can obtain mspan page count.

| class | max object size | span size | object count | tail waste | max memory waste rate | min alignment |

|---|---|---|---|---|---|---|

| 1 | 8 | 8192 | 1024 | 0 | 87.50% | 8 |

| 2 | 16 | 8192 | 512 | 0 | 43.75% | 16 |

| 3 | 24 | 8192 | 341 | 8 | 29.24% | 8 |

| 4 | 32 | 8192 | 256 | 0 | 21.88% | 32 |

| 5 | 48 | 8192 | 170 | 32 | 31.52% | 16 |

| 6 | 64 | 8192 | 128 | 0 | 23.44% | 64 |

| 7 | 80 | 8192 | 102 | 32 | 19.07% | 16 |

| 8 | 96 | 8192 | 85 | 32 | 15.95% | 32 |

| 9 | 112 | 8192 | 73 | 16 | 13.56% | 16 |

| 10 | 128 | 8192 | 64 | 0 | 11.72% | 128 |

| 11 | 144 | 8192 | 56 | 128 | 11.82% | 16 |

| 12 | 160 | 8192 | 51 | 32 | 9.73% | 32 |

| 13 | 176 | 8192 | 46 | 96 | 9.59% | 16 |

| 14 | 192 | 8192 | 42 | 128 | 9.25% | 64 |

| 15 | 208 | 8192 | 39 | 80 | 8.12% | 16 |

| 16 | 224 | 8192 | 36 | 128 | 8.15% | 32 |

| 17 | 240 | 8192 | 34 | 32 | 6.62% | 16 |

| 18 | 256 | 8192 | 32 | 0 | 5.86% | 256 |

| 19 | 288 | 8192 | 28 | 128 | 12.16% | 32 |

| 20 | 320 | 8192 | 25 | 192 | 11.80% | 64 |

| 21 | 352 | 8192 | 23 | 96 | 9.88% | 32 |

| 22 | 384 | 8192 | 21 | 128 | 9.51% | 128 |

| 23 | 416 | 8192 | 19 | 288 | 10.71% | 32 |

| 24 | 448 | 8192 | 18 | 128 | 8.37% | 64 |

| 25 | 480 | 8192 | 17 | 32 | 6.82% | 32 |

| 26 | 512 | 8192 | 16 | 0 | 6.05% | 512 |

| 27 | 576 | 8192 | 14 | 128 | 12.33% | 64 |

| 28 | 640 | 8192 | 12 | 512 | 15.48% | 128 |

| 29 | 704 | 8192 | 11 | 448 | 13.93% | 64 |

| 30 | 768 | 8192 | 10 | 512 | 13.94% | 256 |

| 31 | 896 | 8192 | 9 | 128 | 15.52% | 128 |

| 32 | 1024 | 8192 | 8 | 0 | 12.40% | 1024 |

| 33 | 1152 | 8192 | 7 | 128 | 12.41% | 128 |

| 34 | 1280 | 8192 | 6 | 512 | 15.55% | 256 |

| 35 | 1408 | 16384 | 11 | 896 | 14.00% | 128 |

| 36 | 1536 | 8192 | 5 | 512 | 14.00% | 512 |

| 37 | 1792 | 16384 | 9 | 256 | 15.57% | 256 |

| 38 | 2048 | 8192 | 4 | 0 | 12.45% | 2048 |

| 39 | 2304 | 16384 | 7 | 256 | 12.46% | 256 |

| 40 | 2688 | 8192 | 3 | 128 | 15.59% | 128 |

| 41 | 3072 | 24576 | 8 | 0 | 12.47% | 1024 |

| 42 | 3200 | 16384 | 5 | 384 | 6.22% | 128 |

| 43 | 3456 | 24576 | 7 | 384 | 8.83% | 128 |

| 44 | 4096 | 8192 | 2 | 0 | 15.60% | 4096 |

| 45 | 4864 | 24576 | 5 | 256 | 16.65% | 256 |

| 46 | 5376 | 16384 | 3 | 256 | 10.92% | 256 |

| 47 | 6144 | 24576 | 4 | 0 | 12.48% | 2048 |

| 48 | 6528 | 32768 | 5 | 128 | 6.23% | 128 |

| 49 | 6784 | 40960 | 6 | 256 | 4.36% | 128 |

| 50 | 6912 | 49152 | 7 | 768 | 3.37% | 256 |

| 51 | 8192 | 8192 | 1 | 0 | 15.61% | 8192 |

| 52 | 9472 | 57344 | 6 | 512 | 14.28% | 256 |

| 53 | 9728 | 49152 | 5 | 512 | 3.64% | 512 |

| 54 | 10240 | 40960 | 4 | 0 | 4.99% | 2048 |

| 55 | 10880 | 32768 | 3 | 128 | 6.24% | 128 |

| 56 | 12288 | 24576 | 2 | 0 | 11.45% | 4096 |

| 57 | 13568 | 40960 | 3 | 256 | 9.99% | 256 |

| 58 | 14336 | 57344 | 4 | 0 | 5.35% | 2048 |

| 59 | 16384 | 16384 | 1 | 0 | 12.49% | 8192 |

| 60 | 18432 | 73728 | 4 | 0 | 11.11% | 2048 |

| 61 | 19072 | 57344 | 3 | 128 | 3.57% | 128 |

| 62 | 20480 | 40960 | 2 | 0 | 6.87% | 4096 |

| 63 | 21760 | 65536 | 3 | 256 | 6.25% | 256 |

| 64 | 24576 | 24576 | 1 | 0 | 11.45% | 8192 |

| 65 | 27264 | 81920 | 3 | 128 | 10.00% | 128 |

| 66 | 28672 | 57344 | 2 | 0 | 4.91% | 4096 |

| 67 | 32768 | 32768 | 1 | 0 | 12.50% | 8192 |

The calculation logic for these values can be found in the printComment function of runtime.mksizeclasses.go. The maximum memory waste rate formula is:

float64((size-prevSize-1)*objects+tailWaste) / float64(spanSize)For example, when class is 2, its maximum memory waste rate is:

((16-8-1)*512+0)/8192 = 0.4375When class value is 0, it's the spanClass used for allocating large objects above 32KB. Basically, one large object occupies one mspan. So Go's heap memory is actually composed of several mspan linked lists of different fixed sizes.

heaparena

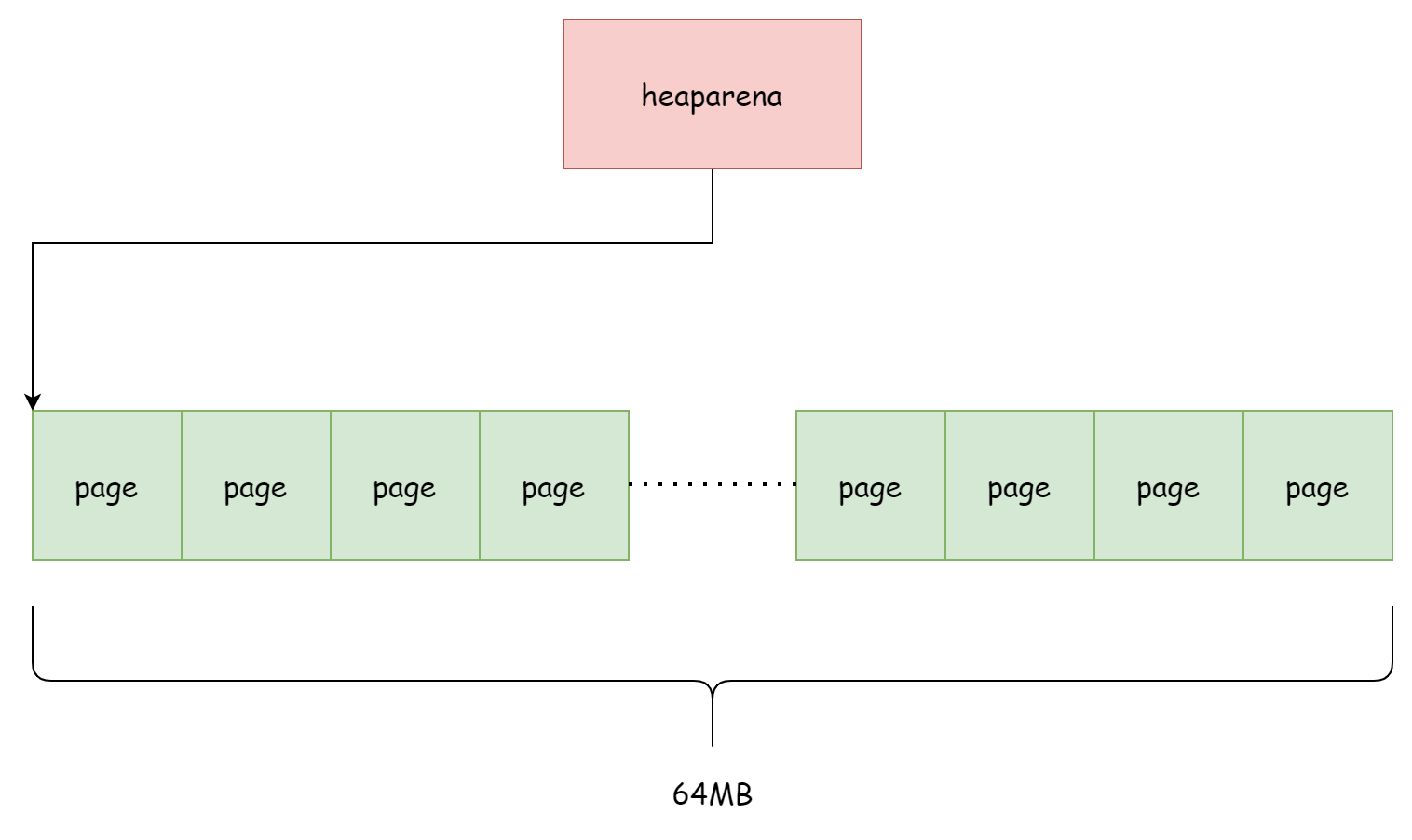

As mentioned earlier, mspan consists of several pages, but mspan only holds references to page addresses and is not responsible for managing these pages. The one truly responsible for managing these page memories is runtime.heaparena. Each heaparena manages several pages. heaparena size is determined by runtime.heapArenaBytes, usually 64MB. bitmap is used to identify whether the corresponding address in the page stores objects. zeroedBase is the starting address of the page memory managed by this heaparena. spans records which mspan uses each page.

type heapArena struct {

_ sys.NotInHeap

bitmap [heapArenaBitmapWords]uintptr

noMorePtrs [heapArenaBitmapWords / 8]uint8

spans [pagesPerArena]*mspan

pageInUse [pagesPerArena / 8]uint8

pageMarks [pagesPerArena / 8]uint8

pageSpecials [pagesPerArena / 8]uint8

checkmarks *checkmarksMap

zeroedBase uintptr

}The logic about pages and mspan records can be found in the mheap.setSpans method, as follows:

func (h *mheap) setSpans(base, npage uintptr, s *mspan) {

p := base / pageSize

ai := arenaIndex(base)

ha := h.arenas[ai.l1()][ai.l2()]

for n := uintptr(0); n < npage; n++ {

i := (p + n) % pagesPerArena

if i == 0 {

ai = arenaIndex(base + n*pageSize)

ha = h.arenas[ai.l1()][ai.l2()]

}

ha.spans[i] = s

}

}In Go heap, all page memory is managed by a two-dimensional heaparena array. See the mheap.arenas field:

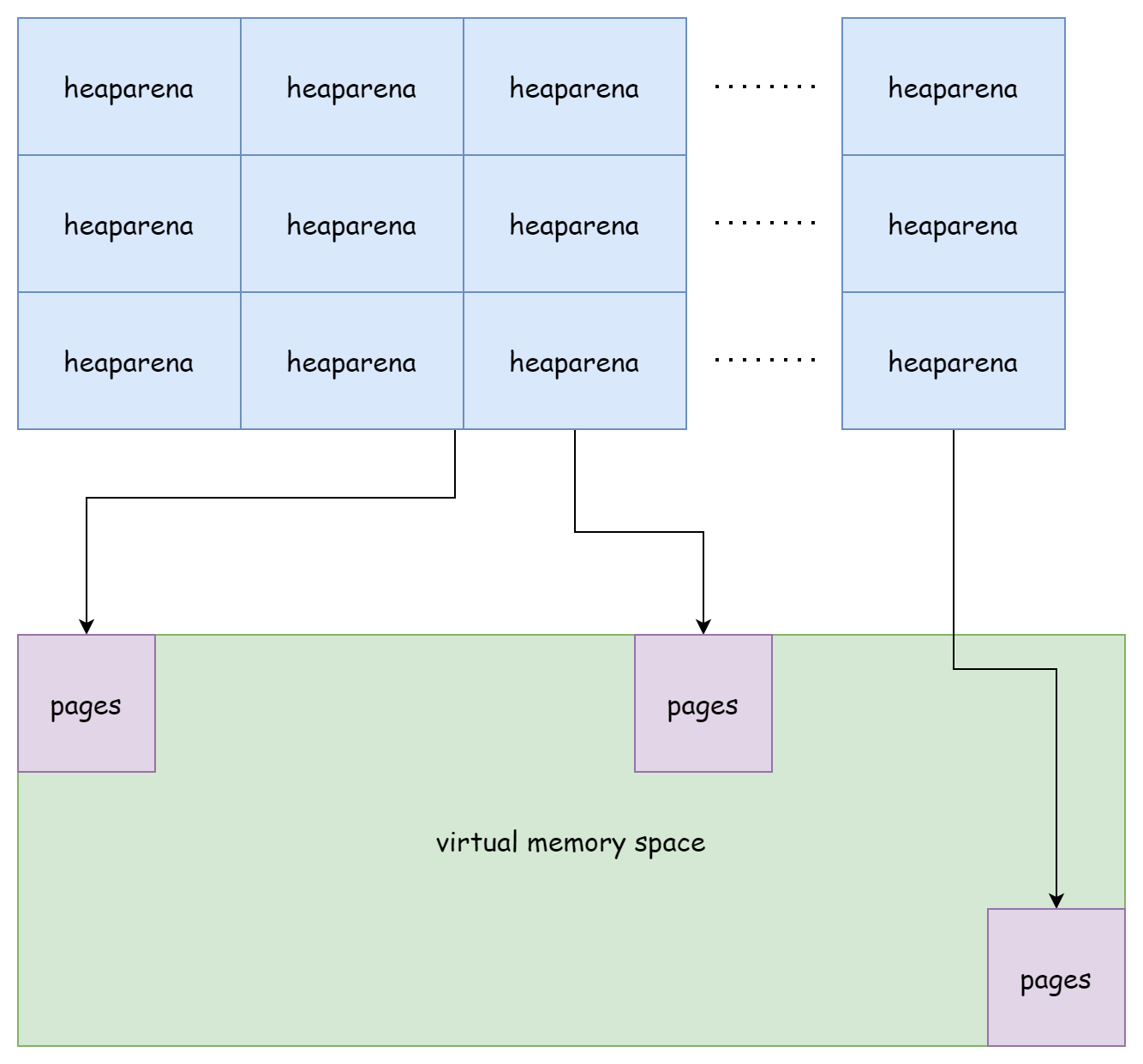

type mheap struct {

arenas [1 << arenaL1Bits]*[1 << arenaL2Bits]*heapArena

}On 64-bit Windows platforms, the array's first dimension is 1 << 6, and the second dimension is 1 << 16. On 64-bit Linux platforms, the first dimension is 1, and the second dimension is 1 << 22. This two-dimensional array composed of all heaparena forms Go runtime's virtual memory space. Overall, it looks like the figure below.

Although heaparena are adjacent, the page memories they manage are not contiguous.

mcache

mcache corresponds to the runtime.mcache structure, which has appeared in the concurrent scheduling article. Although its name is mcache, it's actually bound to processor P. mcache is the memory cache on each processor P, containing the mspan linked list array alloc. The array size is fixed at 136, which is exactly twice the number of spanClass. There's also the tiny object cache tiny, where tiny points to the starting address of tiny object memory, tinyoffset is the idle memory offset relative to the starting address, and tinyAllocs indicates how many tiny objects have been allocated. For stack cache stackcache, you can learn about it in Stack Memory Allocation.

type mcache struct {

_ sys.NotInHeap

nextSample uintptr // trigger heap sample after allocating this many bytes

scanAlloc uintptr // bytes of scannable heap allocated

tiny uintptr

tinyoffset uintptr

tinyAllocs uintptr

alloc [numSpanClasses]*mspan

stackcache [_NumStackOrders]stackfreelist

flushGen atomic.Uint32

}During initialization, the linked lists in mcache's alloc only contain an empty head node runtime.emptymspan, which is an mspan with no available memory.

func allocmcache() *mcache {

var c *mcache

systemstack(func() {

lock(&mheap_.lock)

c = (*mcache)(mheap_.cachealloc.alloc())

c.flushGen.Store(mheap_.sweepgen)

unlock(&mheap_.lock)

})

for i := range c.alloc {

c.alloc[i] = &emptymspan

}

c.nextSample = nextSample()

return c

}Only when memory allocation is needed will it apply for a new mspan from mcentral to replace the original empty span. This work is completed by the mcache.refill method. Its only call entry is the runtime.mallocgc function. Below is the simplified code:

func (c *mcache) refill(spc spanClass) {

// Return the current cached span to the central lists.

s := c.alloc[spc]

// Get a new cached span from the central lists.

s = mheap_.central[spc].mcentral.cacheSpan()

if s == nil {

throw("out of memory")

}

c.scanAlloc = 0

c.alloc[spc] = s

}The benefit of using mcache is that memory allocation doesn't require a global lock. However, when its memory is insufficient and it needs to access mcentral, locking is still required.

mcentral

runtime.mcentral manages all mspan storing small objects in the heap. When mcache applies for memory, it's allocated by mcentral.

type mcentral struct {

_ sys.NotInHeap

spanclass spanClass

partial [2]spanSet

full [2]spanSet

}mcentral has few fields. spanClass indicates the stored mspan type. partial and full are two spanSet. The former stores mspan with idle memory, and the latter stores mspan without idle memory. mcentral is directly managed by mheap heap. At runtime, there are a total of 136 mcentral.

type mheap struct {

central [numSpanClasses]struct {

mcentral mcentral

pad [(cpu.CacheLinePadSize - unsafe.Sizeof(mcentral{})%cpu.CacheLinePadSize) % cpu.CacheLinePadSize]byte

}

}mcentral is mainly responsible for two tasks: allocating available mspan to mcache when memory is sufficient, and applying for a new mspan from mheap when memory is insufficient. The work of allocating mspan to mcache is completed by the mcentral.cacheSpan method. First, it tries to find available mspan in the swept set of the idle list:

// Try partial swept spans first.

sg := mheap_.sweepgen

if s = c.partialSwept(sg).pop(); s != nil {

goto havespan

}If not found, it looks for available mspan in the unswept set of the idle list:

for ; spanBudget >= 0; spanBudget-- {

s = c.partialUnswept(sg).pop()

if s == nil {

break

}

if s, ok := sl.tryAcquire(s); ok {

s.sweep(true)

sweep.active.end(sl)

goto havespan

}

}If still not found, it goes to the non-idle list's unswept set to look:

for ; spanBudget >= 0; spanBudget-- {

s = c.fullUnswept(sg).pop()

if s == nil {

break

}

if s, ok := sl.tryAcquire(s); ok {

s.sweep(true)

freeIndex := s.nextFreeIndex()

if freeIndex != s.nelems {

s.freeindex = freeIndex

sweep.active.end(sl)

goto havespan

}

c.fullSwept(sg).push(s.mspan)

}

}If still not found in the end, the mcentral.grow method applies for a new mspan from mheap:

s = c.grow()

if s == nil {

return nil

}Under normal circumstances, an available mspan will be returned one way or another:

havespan:

freeByteBase := s.freeindex &^ (64 - 1)

whichByte := freeByteBase / 8

// Init alloc bits cache.

s.refillAllocCache(whichByte)

s.allocCache >>= s.freeindex % 64

return sFor the process of applying for mspan from mheap, it actually calls the mheap.alloc method, which returns a new mspan:

func (c *mcentral) grow() *mspan {

npages := uintptr(class_to_allocnpages[c.spanclass.sizeclass()])

size := uintptr(class_to_size[c.spanclass.sizeclass()])

s := mheap_.alloc(npages, c.spanclass)

if s == nil {

return nil

}

n := s.divideByElemSize(npages << _PageShift)

s.limit = s.base() + size*n

s.initHeapBits(false)

return s

}After initializing it properly, it can be allocated to mcache for use.

mheap

runtime.mheap is the manager of Go language heap memory. At runtime, it exists as the global variable runtime.mheap_:

var mheap_ mheapIt manages all created mspan, all mcentral, and all heaparena, as well as many other various allocators. Its simplified structure is as follows:

type mheap struct {

_ sys.NotInHeap

lock mutex

allspans []*mspan // all spans out there

pagesInUse atomic.Uintptr // pages of spans in stats mSpanInUse

pagesSwept atomic.Uint64 // pages swept this cycle

pagesSweptBasis atomic.Uint64 // pagesSwept to use as the origin of the sweep ratio

arenas [1 << arenaL1Bits]*[1 << arenaL2Bits]*heapArena

allArenas []arenaIdx

sweepArenas []arenaIdx

central [numSpanClasses]struct {

mcentral mcentral

pad [(cpu.CacheLinePadSize - unsafe.Sizeof(mcentral{})%cpu.CacheLinePadSize) % cpu.CacheLinePadSize]byte

}

pages pageAlloc // page allocation data structure

spanalloc fixalloc // allocator for span*

cachealloc fixalloc // allocator for mcache*

specialfinalizeralloc fixalloc // allocator for specialfinalizer*

specialprofilealloc fixalloc // allocator for specialprofile*

specialReachableAlloc fixalloc // allocator for specialReachable

specialPinCounterAlloc fixalloc // allocator for specialPinCounter

arenaHintAlloc fixalloc // allocator for arenaHints

}For mheap, there are mainly four tasks to do at runtime:

- Initialize the heap

- Allocate

mspan - Free

mspan - Heap expansion

Let's discuss these four things in order.

Initialization

Heap initialization occurs during the program's bootstrap phase, which is also the scheduler's initialization phase. The call order is:

schedinit() -> mallocinit() -> mheap_.init()During initialization, it's mainly responsible for executing the initialization work of various allocators:

func (h *mheap) init() {

h.spanalloc.init(unsafe.Sizeof(mspan{}), recordspan, unsafe.Pointer(h), &memstats.mspan_sys)

h.cachealloc.init(unsafe.Sizeof(mcache{}), nil, nil, &memstats.mcache_sys)

h.specialfinalizeralloc.init(unsafe.Sizeof(specialfinalizer{}), nil, nil, &memstats.other_sys)

h.specialprofilealloc.init(unsafe.Sizeof(specialprofile{}), nil, nil, &memstats.other_sys)

h.specialReachableAlloc.init(unsafe.Sizeof(specialReachable{}), nil, nil, &memstats.other_sys)

h.specialPinCounterAlloc.init(unsafe.Sizeof(specialPinCounter{}), nil, nil, &memstats.other_sys)

h.arenaHintAlloc.init(unsafe.Sizeof(arenaHint{}), nil, nil, &memstats.other_sys)

h.spanalloc.zero = false

for i := range h.central {

h.central[i].mcentral.init(spanClass(i))

}

h.pages.init(&h.lock, &memstats.gcMiscSys, false)

}This includes the allocator mheap.spanalloc responsible for allocating mspan, the allocator mheap.pages responsible for page allocation, and the initialization of all mcentral.

Allocation

In mheap, mspan allocation is completed by the mheap.allocSpan method:

func (h *mheap) allocSpan(npages uintptr, typ spanAllocType, spanclass spanClass) (s *mspan)If the requested memory is small enough, i.e., satisfies npages < pageCachePages/4, it tries to get an available mspan from the local P's mspan cache without locking. If P's cache is empty, it will first initialize it:

// If the cache is empty, refill it.

if c.empty() {

lock(&h.lock)

*c = h.pages.allocToCache()

unlock(&h.lock)

}Then it gets from P cache, completed by the mheap.tryAllocMSpan method:

pp := gp.m.p.ptr()

if !needPhysPageAlign && pp != nil && npages < pageCachePages/4 {

c := &pp.pcache

base, scav = c.alloc(npages)

if base != 0 {

s = h.tryAllocMSpan()

if s != nil {

goto HaveSpan

}

}

}The code for getting mspan from P cache is as follows. It tries to get the last mspan in the cache:

func (h *mheap) tryAllocMSpan() *mspan {

pp := getg().m.p.ptr()

// If we don't have a p or the cache is empty, we can't do

// anything here.

if pp == nil || pp.mspancache.len == 0 {

return nil

}

// Pull off the last entry in the cache.

s := pp.mspancache.buf[pp.mspancache.len-1]

pp.mspancache.len--

return s

}If the requested memory is relatively large, it will be allocated on the heap. This process requires holding a lock:

lock(&h.lock)

if base == 0 {

// Try to acquire a base address.

base, scav = h.pages.alloc(npages)

if base == 0 {

var ok bool

growth, ok = h.grow(npages)

if !ok {

unlock(&h.lock)

return nil

}

base, scav = h.pages.alloc(npages)

if base == 0 {

throw("grew heap, but no adequate free space found")

}

}

}

if s == nil {

// We failed to get an mspan earlier, so grab

// one now that we have the heap lock.

s = h.allocMSpanLocked()

}

unlock(&h.lock)First, it uses pageAlloc.alloc to allocate enough page memory. If heap memory is insufficient, mheap.grow performs expansion. After page memory allocation is completed, the linked allocator mheap.spanalloc allocates 64 mspan to P's local cache (64 is exactly half the cache array length), then returns an available mspan from P's cache:

func (h *mheap) allocMSpanLocked() *mspan {

assertLockHeld(&h.lock)

pp := getg().m.p.ptr()

if pp == nil {

// We don't have a p so just do the normal thing.

return (*mspan)(h.spanalloc.alloc())

}

// Refill the cache if necessary.

if pp.mspancache.len == 0 {

const refillCount = len(pp.mspancache.buf) / 2

for i := 0; i < refillCount; i++ {

pp.mspancache.buf[i] = (*mspan)(h.spanalloc.alloc())

}

pp.mspancache.len = refillCount

}

// Pull off the last entry in the cache.

s := pp.mspancache.buf[pp.mspancache.len-1]

pp.mspancache.len--

return s

}According to the above two situations, an available mspan can ultimately be obtained. Finally, after initializing mspan, it can be returned:

HaveSpan:

h.initSpan(s, typ, spanclass, base, npages)

return sFree

Since mspan is allocated by the linked allocator, naturally when freeing memory, it's also released by it:

func (h *mheap) freeSpanLocked(s *mspan, typ spanAllocType) {

assertLockHeld(&h.lock)

// Mark the space as free.

h.pages.free(s.base(), s.npages)

s.state.set(mSpanDead)

h.freeMSpanLocked(s)

}First, it marks the specified page memory as released through the page allocator mheap.pages, then sets mspan's state to mSpanDead, and finally the mheap.spanalloc allocator releases mspan:

func (h *mheap) freeMSpanLocked(s *mspan) {

assertLockHeld(&h.lock)

pp := getg().m.p.ptr()

// First try to free the mspan directly to the cache.

if pp != nil && pp.mspancache.len < len(pp.mspancache.buf) {

pp.mspancache.buf[pp.mspancache.len] = s

pp.mspancache.len++

return

}

// Failing that (or if we don't have a p), just free it to

// the heap.

h.spanalloc.free(unsafe.Pointer(s))

}If P cache is not full, it will be put into P's local cache to continue use. Otherwise, it will be freed back to heap memory.

Expansion

The page memory space managed by heaparena is not all allocated at the beginning. It's only allocated when memory is needed. The one responsible for expanding heap memory is the mheap.grow method. Below is simplified code:

func (h *mheap) grow(npage uintptr) (uintptr, bool) {

assertLockHeld(&h.lock)

ask := alignUp(npage, pallocChunkPages) * pageSize

totalGrowth := uintptr(0)

end := h.curArena.base + ask

nBase := alignUp(end, physPageSize)

if nBase > h.curArena.end || end < h.curArena.base {

av, asize := h.sysAlloc(ask, &h.arenaHints, true)

if uintptr(av) == h.curArena.end {

h.curArena.end = uintptr(av) + asize

} else {

// Switch to the new space.

h.curArena.base = uintptr(av)

h.curArena.end = uintptr(av) + asize

}

nBase = alignUp(h.curArena.base+ask, physPageSize)

}

...

}It first calculates the required memory based on npage and aligns it, then determines whether the current heaparena has enough memory. If not enough, mheap.sysAlloc applies for more memory for the current heaparena or allocates a new heaparena:

func (h *mheap) sysAlloc(n uintptr, hintList **arenaHint, register bool) (v unsafe.Pointer, size uintptr) {

n = alignUp(n, heapArenaBytes)

if hintList == &h.arenaHints {

v = h.arena.alloc(n, heapArenaBytes, &gcController.heapReleased)

if v != nil {

size = n

goto mapped

}

}

...

}First, it tries to use the linear allocator mheap.arena to apply for a piece of memory in the pre-allocated memory space. If it fails, it expands based on hintList. hintList's type is runtime.arenaHint, which specifically records address information related to heaparena expansion:

for *hintList != nil {

hint := *hintList

p := hint.addr

v = sysReserve(unsafe.Pointer(p), n)

if p == uintptr(v) {

hint.addr = p

size = n

break

}

if v != nil {

sysFreeOS(v, n)

}

*hintList = hint.next

h.arenaHintAlloc.free(unsafe.Pointer(hint))

}After memory application is completed, it's updated to the arenas two-dimensional array:

for ri := arenaIndex(uintptr(v)); ri <= arenaIndex(uintptr(v)+size-1); ri++ {

l2 := h.arenas[ri.l1()]

var r *heapArena

r = (*heapArena)(h.heapArenaAlloc.alloc(unsafe.Sizeof(*r), goarch.PtrSize, &memstats.gcMiscSys))

atomic.StorepNoWB(unsafe.Pointer(&l2[ri.l2()]), unsafe.Pointer(r))

}Finally, the page allocator marks this piece of memory as ready:

// Update the page allocator's structures to make this

// space ready for allocation.

h.pages.grow(v, nBase-v)

totalGrowth += nBase - vObject Allocation

When Go allocates memory for objects, it divides them into three different types based on size:

- Tiny objects - tiny, less than 16B

- Small objects - small, less than 32KB

- Large objects - large, greater than 32KB

According to these three different types, different logic is executed during memory allocation. The function responsible for allocating object memory is runtime.mallocgc, with the following function signature:

func mallocgc(size uintptr, typ *_type, needzero bool) unsafe.PointerIt has only three parameters: memory size, type, and a boolean value indicating whether memory needs to be cleared. It's the entry function for all Go object memory allocation. When using the new function to create pointers, it also enters this function. When memory allocation succeeds, the returned pointer is the object's address. In the mspan section, it was mentioned that each mspan has a spanClass. spanClass determines mspan's fixed size. Go divides the range from [0, 32KB] into 68 different sizes. So Go memory is composed of several mspan linked lists of different fixed sizes. When allocating object memory, just calculate the corresponding spanClass according to object size, then find the corresponding mspan linked list according to spanClass, and finally look for available mspan from the linked list. This hierarchical approach can more effectively solve the memory fragmentation problem.

Tiny Objects

All non-pointer tiny objects less than 16B are allocated to the same contiguous memory by the tiny allocator in P. In runtime.mcache, the tiny field records the base address of this memory:

type mcache struct {

tiny uintptr

tinyoffset uintptr

tinyAllocs uintptr

}The size of tiny objects is determined by the runtime.maxTinySize constant, which is 16B. The memory block used to store tiny objects is also this size. Generally, objects stored here are some small strings. The part of code responsible for allocating tiny objects is as follows:

if size <= maxSmallSize {

if noscan && size < maxTinySize {

off := c.tinyoffset

if off+size <= maxTinySize && c.tiny != 0 {

x = unsafe.Pointer(c.tiny + off)

c.tinyoffset = off + size

c.tinyAllocs++

mp.mallocing = 0

releasem(mp)

return x

}

// Allocate a new maxTinySize block.

span = c.alloc[tinySpanClass]

v := nextFreeFast(span)

if v == 0 {

v, span, shouldhelpgc = c.nextFree(tinySpanClass)

}

x = unsafe.Pointer(v)

(*[2]uint64)(x)[0] = 0

(*[2]uint64)(x)[1] = 0

if (size < c.tinyoffset || c.tiny == 0) {

c.tiny = uintptr(x)

c.tinyoffset = size

}

size = maxTinySizeIf the current tiny memory block has enough space to accommodate, i.e., off+size <= maxTinySize, it directly uses the current memory block. If not enough, it first tries to get available space from mcache's span cache. If that doesn't work either, it applies for an mspan from mcentral. Regardless, it will ultimately get an available address, and finally replace the old with the new tiny object memory block.

Small Objects

Most objects in Go language runtime are small objects in the range [16B, 32KB]. Small object allocation process is the most troublesome, but the code is the least. The part of code responsible for small object allocation is as follows:

var sizeclass uint8

if size <= smallSizeMax-8 {

sizeclass = size_to_class8[divRoundUp(size, smallSizeDiv)]

} else {

sizeclass = size_to_class128[divRoundUp(size-smallSizeMax, largeSizeDiv)]

}

size = uintptr(class_to_size[sizeclass])

spc := makeSpanClass(sizeclass, noscan)

span = c.alloc[spc]

v := nextFreeFast(span)

if v == 0 {

v, span, shouldhelpgc = c.nextFree(spc)

}

x = unsafe.Pointer(v)

if needzero && span.needzero != 0 {

memclrNoHeapPointers(x, size)

}First, it calculates which spanClass category should be used based on the object's size. Then runtime.nextFreeFast tries to get available memory space from the corresponding cache mspan in mcache according to spanClass:

func nextFreeFast(s *mspan) gclinkptr {

theBit := sys.TrailingZeros64(s.allocCache) // Is there a free object in the allocCache?

if theBit < 64 {

result := s.freeindex + uintptr(theBit)

if result < s.nelems {

freeidx := result + 1

if freeidx%64 == 0 && freeidx != s.nelems {

return 0

}

s.allocCache >>= uint(theBit + 1)

s.freeindex = freeidx

s.allocCount++

return gclinkptr(result*s.elemsize + s.base())

}

}

return 0

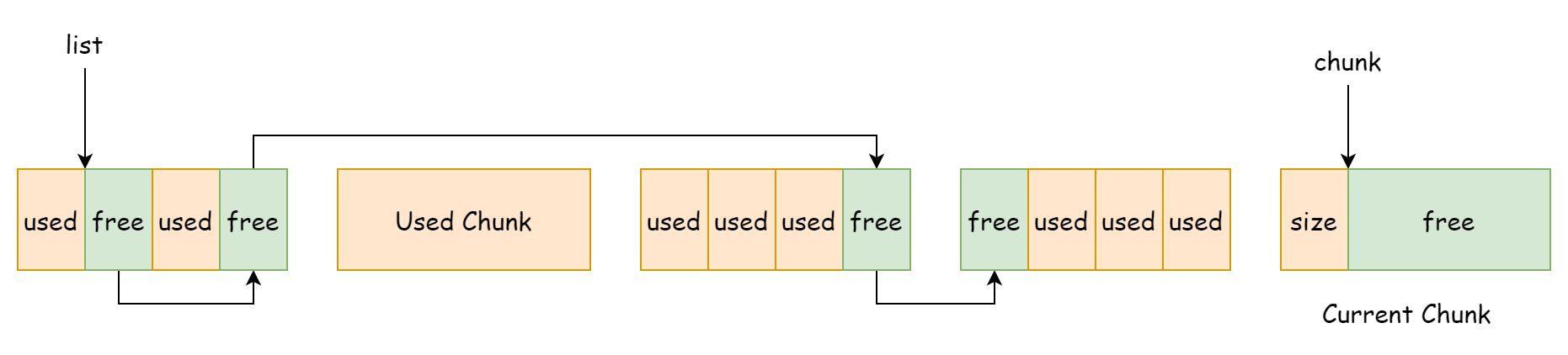

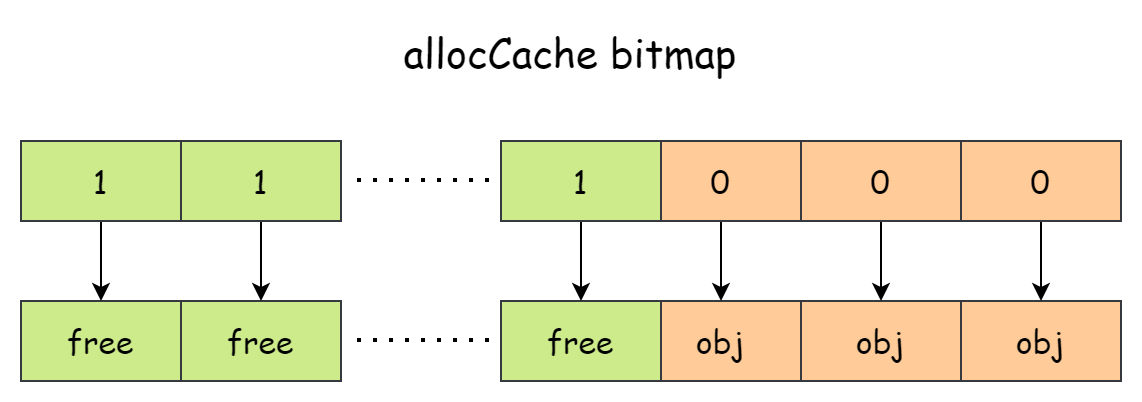

}mspan.allocCache's role is to record whether memory space is used by objects. It divides memory one by one according to object count rather than by space size. This is equivalent to viewing mspan as an object array, as shown in the figure below.

allocCache is a 64-bit number. Each bit corresponds to a piece of memory space. If a bit is 0, it indicates the memory is used by an object. If it's 1, it indicates the memory is idle. sys.TrailingZeros64(s.allocCache)'s purpose is to calculate the number of trailing zeros. If the result is 64, it indicates there's no idle memory available. If there is, it calculates the idle memory offset, adds mspan's base address, and returns.

When mcache doesn't have enough space, it goes to mcentral to apply. This work is completed by the mcache.nextFree method:

func (c *mcache) nextFree(spc spanClass) (v gclinkptr, s *mspan, shouldhelpgc bool) {

s = c.alloc[spc]

shouldhelpgc = false

freeIndex := s.nextFreeIndex()

if freeIndex == s.nelems {

c.refill(spc)

shouldhelpgc = true

s = c.alloc[spc]

freeIndex = s.nextFreeIndex()

}

v = gclinkptr(freeIndex*s.elemsize + s.base())

s.allocCount++

return

}The mcache.refill in it is responsible for applying for an available mspan from mcentral:

func (c *mcache) refill(spc spanClass) {

...

s = mheap_.central[spc].mcentral.cacheSpan()

...

}The mcentral.cacheSpan method performs expansion via mcentral.grow when memory is insufficient. Expansion applies for new mspan from mheap:

func (c *mcentral) grow() *mspan {

...

s := mheap_.alloc(npages, c.spanclass)

...

return s

}So in the end, small object memory allocation goes level by level: first mcache, then mcentral, and finally mheap. mcache allocation cost is the lowest because it's P's local cache, and allocating memory doesn't require holding a lock. mcentral is next. Directly applying for memory from mheap has the highest cost because the mheap.alloc method competes for the entire heap's global lock.

Large Objects

Large object allocation is the simplest. If the object size exceeds 32KB, it directly applies for a new mspan from mheap to accommodate. The part of code responsible for large object allocation is as follows:

shouldhelpgc = true

span = c.allocLarge(size, noscan)

span.freeindex = 1

span.allocCount = 1

size = span.elemsize

x = unsafe.Pointer(span.base())

if needzero && span.needzero != 0 {

if noscan {

delayedZeroing = true

} else {

memclrNoHeapPointers(x, size)

}

}Among them, mcache.allocLarge is responsible for applying for large object memory space from mheap:

func (c *mcache) allocLarge(size uintptr, noscan bool) *mspan {

...

spc := makeSpanClass(0, noscan)

s := mheap_.alloc(npages, spc)

...

return s

}From the code, it can be seen that large objects use spanClass value of 0. Basically, one large object occupies one mspan.

Others

Memory Statistics

Go runtime exposes a function ReadMemStats to users, which can be used to统计 runtime memory conditions:

func ReadMemStats(m *MemStats) {

_ = m.Alloc // nil check test before we switch stacks, see issue 61158

stopTheWorld(stwReadMemStats)

systemstack(func() {

readmemstats_m(m)

})

startTheWorld()

}But using it comes at a very high cost. As can be seen from the code, STW is required before analyzing memory conditions. STW duration may range from a few milliseconds to hundreds of milliseconds. It's generally only used during debugging and troubleshooting. The runtime.MemStats structure records information related to heap memory, stack memory, and GC:

type MemStats struct {

// Overall statistics

Alloc uint64

TotalAlloc uint64

Sys uint64

Lookups uint64

Mallocs uint64

Frees uint64

// Heap memory statistics

HeapAlloc uint64

HeapSys uint64

HeapIdle uint64

HeapInuse uint64

HeapReleased uint64

HeapObjects uint64

// Stack memory statistics

StackInuse uint64

StackSys uint64

// Memory component statistics

MSpanInuse uint64

MSpanSys uint64

MCacheInuse uint64

MCacheSys uint64

BuckHashSys uint64

// GC related statistics

GCSys uint64

OtherSys uint64

NextGC uint64

LastGC uint64

PauseTotalNs uint64

PauseNs [256]uint64

PauseEnd [256]uint64

NumGC uint32

NumForcedGC uint32

GCCPUFraction float64

EnableGC bool

DebugGC bool

BySize [61]struct {

Size uint32

Mallocs uint64

Frees uint64

}

}NotInHeap

The memory allocator is obviously used to allocate heap memory, but the heap is divided into two parts: one part is the heap memory needed by Go runtime itself, and the other part is the heap memory open to users. So in some structures, you can see such embedded fields:

_ sys.NotInHeapThis indicates that the type's memory will not be allocated on the user heap. This kind of embedded field is especially common in memory allocation components, such as the structure representing the user heap runtime.mheap:

type mheap struct {

_ sys.NotInHeap

}The real role of sys.NotInHeap is to avoid memory barriers to improve runtime efficiency, while the user heap needs to run GC so it needs memory barriers.